
The world of artificial intelligence is now at the centre of a fierce legal storm. But this fight isn’t about code; it’s about copyright.

Creators and corporations across industries are launching lawsuits that question how AI is trained. And the stakes couldn’t be higher.

These legal challenges are already shaking up the tech world.

They pit creators’ rights against the ambitions of AI developers.

As the cases pile up, it’s clear: the early disputes are over. This is now a full-scale global battle.

The Global Legal Battlefield

AI giants are being hit with a wave of copyright lawsuits across continents.

In the US, the pressure is mounting. News outlets and authors have filed multiple lawsuits against OpenAI and Microsoft.

Visual artists have targeted Stability AI and Midjourney.

One of the most high-profile cases comes from The New York Times. It has accused OpenAI and Microsoft of using its content without permission to train ChatGPT.

And it doesn’t stop there. Comedian and author Sarah Silverman is suing OpenAI and Meta for similar reasons. The creative world is mobilising.

Now, the music industry has entered the ring.

Major record labels are suing AI platforms Suno and Udio, claiming their recordings were used without consent. This widens the fight beyond text and visuals. AI’s legal reckoning is now reaching every corner of the creative economy.

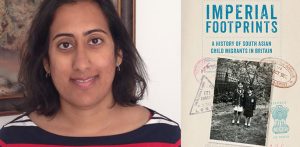

The UK is also a critical front. Getty Images has taken Stability AI to court in London. It’s the first major UK trial over AI and copyright.

What happens here will ripple far beyond Britain. As different legal systems tackle the issue, the outcomes will help define global norms.

The Heart of the Matter: Training Data

At the core of this legal clash is the way AI is trained.

Generative AI systems rely on massive datasets scraped from the internet. That data often includes copyrighted content.

AI firms argue this counts as “fair use” under US law. They say their systems transform the data, learning patterns like a human would. The results, they claim, are new and unique.

But creators say otherwise.

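Fair use in the US is judged on four factors: the purpose and character of the use, the nature of the copyrighted work, the amount of the work used, and the effect on the market for the original.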

Many argue that AI’s commercial use weighs against it. They also question the scale: millions of copyrighted works used without payment.

Rights holders say this isn’t fair use; it’s theft.

Getty Images, for instance, claims Stability AI took millions of its images without a license. Even worse, some AI-generated images still show Getty’s watermark.

That, they argue, proves the outputs aren’t truly new. This “fair use” debate could decide AI’s future.

Getty Images vs Stability AI

The Getty Images versus Stability AI lawsuit is one of the most important battles in this legal war. It’s unfolding in London’s High Court and drawing attention from around the world.

Getty, one of the biggest names in stock photography, accuses London-based startup Stability AI of infringement. It’s a David versus Goliath clash, with deep global implications.

Getty’s lawyers insist that AI and creative industries can work together. But they stress that licensing is essential.

Lindsay Lane KC, representing Getty, said in court:

“The problem is when AI companies such as Stability AI want to use those works without payment.”

Stability AI has denied the claims. Its lawyer, Hugo Cuddigan, said the case poses an “overt threat” to the entire AI sector.

He argued that very few outputs actually resemble Getty’s images. The company also disputes the UK court’s jurisdiction, claiming the model was trained on servers in the US.

With the UK keen to become an “AI superpower,” the outcome here could shape how the country balances innovation and intellectual property.

Hollywood’s Stand

Hollywood is now fully engaged in the copyright fight.

Media giants Disney and Universal have jointly filed a lawsuit against Midjourney, the AI image generator. This marks the first time major studios have taken legal action against a generative AI company.

The lawsuit, filed in Los Angeles federal court, accuses Midjourney of large-scale piracy.

The studios say the platform enables users to produce endless unauthorised images of iconic characters like Darth Vader and the Minions.

The complaint is blunt. It calls Midjourney a “quintessential copyright free-rider and a bottomless pit of plagiarism”.

Midjourney CEO David Holz has a different view. In a previous interview, he likened the platform to a search engine.

He asked: “Can a person look at somebody else’s picture and learn from it and make a similar picture?”

If the final images are different, he argued, “it seems like it’s fine”.

This case opens a new legal front. The entertainment industry is stepping in to protect its most valuable assets.

The Shifting Legal Landscape

The copyright battle over AI is far from over.

Most cases are still ongoing, with courts yet to reach final verdicts. But early decisions are giving a glimpse of where things might go.

In the Andersen versus Stability AI case, US judge William Orrick has shown scepticism about the artists’ claims. He suggested it’s hard to prove that AI outputs are close enough to the original training images.

On the other hand, a recent ruling offered some encouragement to rights holders.

A US federal court in Delaware granted a partial summary judgment in favour of Thomson Reuters in a case against Ross Intelligence.

That lawsuit centres on whether copyrighted works can legally be used to train AI systems. It may be one of the first cases to set a clear precedent.

New laws could also reshape the battlefield.

The EU’s AI Act, which took effect in August 2024, requires transparency from AI developers. Companies must follow copyright laws and disclose summaries of their training data.

Meanwhile, the UK is crafting its own approach. All eyes are on how these governments balance innovation with creators’ rights.

So, are the lawsuits against AI companies succeeding?

It’s not a simple yes or no. The legal picture is fragmented and still evolving. Both sides have seen partial wins and tough setbacks.

But the scale of the battle has changed.

With powerhouses like The New York Times, Getty Images, Disney and Universal now involved, the creators’ side has serious muscle.

These cases are about more than damages. They’re about reshaping the rules of the digital age.

At stake is the entire foundation of the AI business model. The world’s judges and lawmakers now face a huge responsibility. They must redraw the boundaries of intellectual property for the era of artificial intelligence.

Whatever happens next, the impact will be felt for decades.

These decisions will change how we create, share, and value content in a world powered by AI.

The future of both human creativity and machine innovation now hangs in the balance.