“Something like this is honestly, extremely scary”

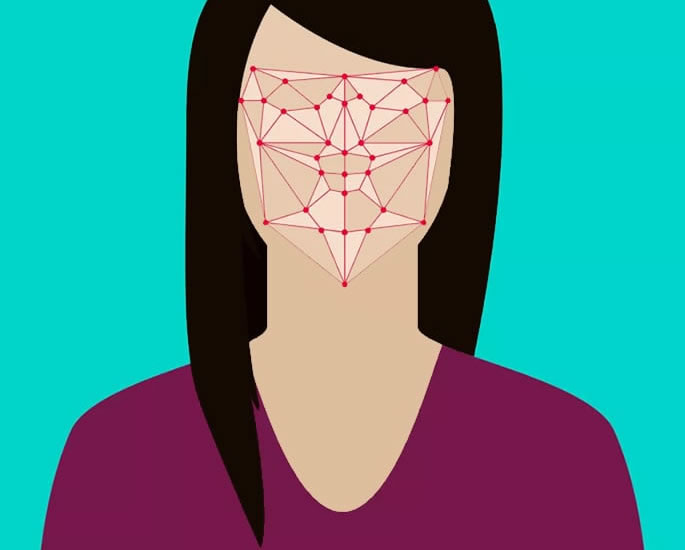

Social media is hugely popular in India but it also has a dark side and one prominent aspect is deepfakes.

Deepfakes first surfaced on the web in 2017, most notably on Reddit, which later banned them.

The subject has become a dark corner of the internet, where thousands of people have gathered to share fake videos of celebrity women having sex.

According to a 2023 State of Deepfakes report from Home Security Heroes, the number of online deepfakes has spiked by 550% since 2019, to 95,820.

India is the sixth most vulnerable country.

There is a separate category that is made up of morphed videos and images of Bollywood actresses.

Pornographic scenes featuring the faces of Deepika Padukone and Priyanka Chopra are common.

Deepfakes have typically been unsophisticated, and many viewers could tell that they were fake.

Satnam Narang, a threat researcher at Tenable, says:

“So far, the advancement of generative AI has not yet had an impact on the world of deepfakes.

“We’re still seeing rudimentary deepfakes being used to scam victims out of money as part of cryptocurrency scams.

“However, once generative AI adoption occurs in this space, it will make it that much harder for users to distinguish between deep fake and non-deep fake-generated content.”

But as photo and video editing tools become easier to use and are combined with AI, doctoring an image or a video has become both easier and more sophisticated.

Deepfakes of Rashmika Mandanna and Katrina Kaif have recently been in the limelight but are just the tip of the iceberg.

The underlying problem is much bigger.

Rashmika’s Case

A video went viral, showing a woman entering a lift in a low-cut unitard.

Rashmika Mandanna’s face had been superimposed on the woman.

The woman was actually Zara Patel, a British woman with 400,000 Instagram followers.

Rashmika broke her silence on the matter, saying:

“Something like this is honestly, extremely scary not only for me, but also for each one of us who today is vulnerable to so much harm because of how technology is being misused.

“Today, as a woman and as an actor, I am thankful for my family, friends and well-wishers who are my protection and support system.

“But if this happened to me when I was in school or college, I genuinely can’t imagine how could I ever tackle this.

“We need to address this as a community and with urgency before more of us are affected by such identity theft.”

Zara Patel said: “Hi all, it has come to my attention that someone created a deepfake video using my body and a popular Bollywood actress’ face.

“I had no involvement with the deepfake video, and I’m deeply disturbed and upset by what is happening.

“I worry about the future of women and girls who now have to fear even more about putting themselves on social media.

“Please take a step back and fact-check what you see on the internet. Not everything on the internet is real.”

Katrina’s Case

Just days after the fake video of Rashmika Mandanna went viral, Katrina Kaif also fell victim to deepfaking.

She had shared a behind-the-scenes picture of herself from Tiger 3.

The image is part of Katrina’s fight sequence with Michelle Lee, where both are wearing just towels.

The deepfake featured Katrina without the towel. Instead, the actress was dressed in a revealing white two-piece.

Her body had also been edited, with her curves made noticeably more ample.

The fake image showed Katrina’s hands placed on them for a more sensual pose.

Since both cases came to light, Sonnalli Seygall has revealed that she suffered a similar experience.

She admitted: “Yes, it has happened to me in the past but not in a video, in the form of pictures. And it was very, very scary then.

“Actually, my mom brought it to my notice and my mom is very gullible and at least at that point when it was also new, she did not understand. It really affected her.

“She said what are these pictures of yours? And I was like they are not really mom, they are morphed.

“So it’s very sad. It’s scary and makes me angry.

“It’s completely illegal and just because they’re faceless people doing this does not make it okay by any standards.”

A Much Bigger Issue

While Rashmika Mandanna and Katrina Kaif’s deepfake ordeals are horrific, they are just a small part of a much bigger issue.

Countless Indian women have had their edited images and deepfaked videos leaked online.

Although the Indian Government has said the punishment for posting or distributing such content is three years’ imprisonment and a Rs. 1 Lakh (£980) fine, there are issues with this.

Firstly, getting someone to register a complaint is a task. Even if a complaint is registered, arrests are rarely made.

Typically, the perpetrator goes unpunished.

Instead, the Indian authorities threaten social media platforms with fines and jail time for their executives until the post is removed.

This works at times but at best, it is a temporary solution.

When it comes to social media sites allowing such posts, most platforms have no method to filter out deepfake content after it is posted.

Content moderation is time-consuming and expensive.

Most companies are working on mechanisms that will help them flag such content. But these processes will largely depend on AI, which can be easily fooled.

Does India have a Law against Deepfakes?

In response to the Rashmika Mandanna deepfake, the government issued a reminder to social media platforms about deepfakes and the applicable penalties.

The government cited Section 66D of the Information Technology Act, 2000, which relates to “punishment for cheating by personation by using computer resource”.

The section states: “Whoever, by means of any communication device or computer resource cheats by personation, shall be punished with imprisonment of either description for a term which may extend to three years and shall also be liable to fine which may extend to one lakh rupees.”

Union Minister of State for Electronics and Information Technology Rajeev Chandrasekhar said Prime Minister Narendra Modi was committed to ensuring safety and trust for Indians in the digital space.

He tweeted: “Under the IT rules notified in April 2023, it is a legal obligation for platforms to:

- ensure no misinformation is posted by any user.

- ensure that when reported by any user or govt, misinformation is removed in 36 hrs.

- if platforms do not comply with this, rule 7 will apply and platforms can be taken to court by the aggrieved person under provisions of IPC.”

He added:

“Deepfakes are the latest and even more dangerous and damaging form of misinformation and need to be dealt with by platforms.”

But deepfake porn is a massive concern.

While some of the existing laws in India can be applied to counter deepfakes, there is a growing need for specific legislation to tackle the challenges posed by such content.

Radhika Roy, advocate and associate legal counsel at Internet Freedom Foundation (IFF), said the term “deepfake” has not been defined explicitly in any statute.

But there are legal provisions to tackle it, such as Section 67 of the IT Act, “which can be used for publishing obscene material in electronic form”.

She said: “There needs to be clearer explanations with clearer consequences when it comes to deepfakes, given their insidious nature and how it’s sometimes impossible to tell what’s real from what’s fake.”

What Needs to be Done?

What India needs is a set of enforceable laws.

The government needs to look at countries like Singapore and China, where people have been prosecuted for posting deepfakes.

The Cyberspace Administration of China (CAC) introduced comprehensive legislation designed to regulate the dissemination of deepfake content.

It prohibits the creation and distribution of deepfakes generated without the consent of the individuals depicted, and it requires specific identification measures for content produced through artificial intelligence.

In Singapore, the Protection from Online Falsehoods and Manipulation Act (POFMA) serves as a legal framework that forbids deepfake videos.

Similarly, South Korea mandates that AI-generated content and edited videos and photos such as deepfakes be labelled as such on social media platforms.

Since such laws are yet to be introduced in India, the existing provisions in Sections 67 and 67A of the Information Technology Act (2000) can be used.

Elements of these sections, which cover the publication or transmission of obscene material in electronic form and related activities, may be applied to protect the rights of deepfake victims.

Although deepfaking is not new, the instances involving Rashmika Mandanna and Katrina Kaif have thrown the subject into the spotlight.

Deepfakes are frightening, both for the victims and because of their potential to spread misinformation.

And with the rise of AI, deepfakes will become even more realistic, spelling danger for potential victims.

It is up to the Indian government to take measures to crack down on the production and distribution of deepfakes.