“Our creative industries face a clear and present danger”

A report has warned that generative AI could undermine copyright protections for creators if the UK government fails to act.

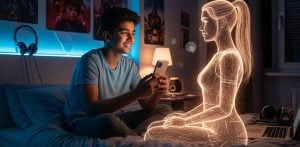

Generative AI systems can now produce imitations of creative material within seconds. These systems rely on models trained on vast quantities of human-created content, often without explicit consent or payment.

The House of Lords Communications and Digital Committee said the issue is not that the UK’s copyright framework is outdated or requires reform.

Instead, it said, the problem is widespread unlicensed use of protected works and limited transparency from AI developers, which leave rightsholders uncertain about whether their content has been used.

This lack of clarity also makes it difficult for creators to enforce their rights when their work has been included in training data.

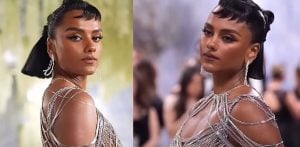

The report also highlights a legal gap in the UK. The absence of a robust “personality right” or specific protection for digital likeness means creators and performers cannot easily challenge AI outputs that imitate their distinctive style, voice or persona.

The committee argues that the government should focus on responsible AI development built on licensing rather than weakening copyright protections in pursuit of technological gains.

Baroness Keeley, chair of the committee, said:

“Our creative industries face a clear and present danger from uncredited and unremunerated use of copyrighted material to train AI models.

“Photographers, musicians, authors and publishers are seeing their work fed into AI models which then produce imitations that take employment and earning opportunities from the original creators.

“AI may contribute to our future economic growth, but the UK creative industries create jobs and economic value now.

“In 2023, the creative industries delivered £124 billion of economic value to the UK and this is set to grow to £141 billion by 2030.

“Watering down the protections in our existing copyright regime to lure the biggest US tech companies is a race to the bottom that does not serve UK interests.

“We should not sacrifice our creative industries for AI jam tomorrow.”

The committee is calling on the government to rule out a new commercial text and data mining exception that would allow AI developers to train models on copyrighted material unless rightsholders opt out.

Peers said mixed public messaging and an extended consultation period have already undermined trust and delayed licensing and investment in the sector.

They urged the government to publish a final decision on its approach to AI and copyright within the next year. In the meantime, ministers should clearly confirm that a text and data mining exception with an opt-out mechanism will not be introduced.

The report also recommends new protections for identity, style and digital replicas. Creators and performers should have clear control over the commercial use of their identity and the ability to challenge unauthorised digital replicas or “in the style of” AI outputs.

Transparency about AI training data is another priority. The committee recommends establishing a mandatory transparency framework requiring UK AI developers to disclose how their models are trained.

It also suggests using public procurement and regulatory tools to encourage international developers to comply with UK transparency requirements.

Peers believe these measures could support the development of a fair licensing market for AI training data.

The report says the government should support this market in a way that works for both AI developers and rightsholders of different sizes.

It also recommends backing technical tools that support a licensing-first approach, including globally aligned standards for rights reservation, data provenance and labelling of AI-generated content.

The committee also highlights the importance of developing sovereign AI models.

Domestically governed systems could offer an alternative to reliance on opaque models developed by major US technology companies.

According to the report, the government’s sovereign AI strategy should prioritise models that provide greater transparency and respect for copyright protections.

Baroness Keeley added: “The Government should now make clear it will not pursue a new text and data mining exception with an opt-out mechanism for training commercial AI models.

“Instead, it should focus on strengthening UK protections for creators, including against unauthorised digital replicas and ‘in the style of’ uses of creators’ work and identity.

“The Government’s task should be to create the conditions that will allow a licensing-first approach to AI training to flourish, backed by effective transparency requirements and technical standards for data provenance and labelling, so that rightsholders and developers can participate confidently in this emerging market.

“The future for AI in the UK should be based on transparent and responsible use of training data.

“We are calling on the Government to embrace the opportunities this presents, and to demonstrate its commitment to the UK’s gold-standard copyright regime and our outstanding creative industries in its forthcoming economic assessment and update on AI and copyright.”