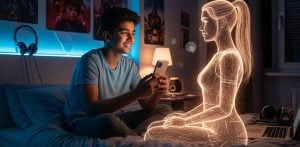

"this means underage children can easily access services"

Major technology companies have been urged to introduce more robust age checks to keep under-13s off their platforms in the UK, similar to the checks already in place for services designed for adults.

The request comes from media regulator Ofcom and the Information Commissioner’s Office (ICO).

The companies contacted include Facebook, Instagram, Snapchat, TikTok, YouTube, Roblox and X.

Both regulators said the firms must do more to prevent underage children from signing up and using services not designed for them.

Ofcom Chief Executive Melanie Dawes said services were currently “failing to put children’s safety at the heart of their products”.

Currently, many platforms rely on users self-reporting their age when signing up.

However, regulators say this method is easily bypassed and allows children under 13 to access social media.

In an open letter, the ICO said: “As self-declaration is easily circumvented, this means underage children can easily access services that have not been designed for them.”

Most major social media platforms set a minimum age of 13. But Ofcom research suggests 86% of children aged 10 to 12 have their own social media profile.

The regulators are calling for “highly-effective age checks”, similar to those already required for certain online services that host adult content.

Under current UK law, such checks are mainly required for services offering over-18 content such as pornography.

Applying similar methods to social media platforms would therefore depend on technology companies voluntarily introducing stronger verification systems for younger users.

The ICO’s concerns also focus on how platforms process children’s personal data.

The letter added: “Where services have set a minimum age – such as 13 – they generally have no lawful basis for processing the personal data of children under that age on their service.”

Technology Secretary Liz Kendall backed the regulators’ push for stronger safeguards. She said no platform would receive special treatment when it comes to protecting young users online.

She added: “No company should need a court order to act responsibly to protect children.”

Several technology firms defended the safety measures already in place on their platforms.

YouTube said it was surprised by Ofcom’s position and questioned the regulator’s approach.

Meta said it had already implemented several of the suggestions raised by Ofcom, “including using AI to detect users’ age based on their activity, and facial age estimation technology”.

The company added that introducing age verification at app store level would mean “parents and teens will only need to provide their personal information once”.

Snapchat said it was currently testing new age verification tools.

TikTok said it uses “enhanced technologies” to detect and remove accounts belonging to children under 13.

The company added that it publishes transparency reports on underage accounts removed from the platform.

TikTok said it removed more than 90 million suspected under-13 accounts between October 2024 and September 2025.

Gaming platform Roblox said it had introduced additional safety protections for younger users.

The company reported releasing 140 new safety features over the past year. These include “the introduction of new mandatory age checks that all players must complete in order to access chat features”.

A Roblox spokesperson added that they “look forward to demonstrating our efforts in our ongoing dialogue with Ofcom”.

Experts say the regulators’ intervention highlights growing concern over children’s safety online.

Professor Amy Orben, a digital mental health expert at Cambridge University, welcomed the action but warned it must be followed by stronger enforcement.

She said: “Safety must be built into products by design rather than treated as an afterthought, with regulators showing more strength in holding companies to account.”

Social media analyst Matt Navarra said: “Knowing a user is a child is step one but designing a platform that doesn’t exploit their attention is the next step – and that step is actually much harder.”