Artificial Intelligence (AI) has rapidly evolved in recent years and this has prompted concerns about the potential dangers of AI.

It has brought about significant advancements and transformative capabilities in various fields.

From autonomous vehicles to virtual assistants, AI has demonstrated its potential to revolutionise industries and enhance human experiences.

But as the power and influence of AI continue to expand, it becomes crucial to address and understand the potential dangers associated with this technology.

Tesla boss Elon Musk once said:

“[AI] scares the hell out of me.

“It’s capable of vastly more than almost anyone knows, and the rate of improvement is exponential.”

Whether it’s the increasing automation of certain jobs, gender- and racially biased algorithms or autonomous weapons that operate without human oversight (to name just a few), unease abounds on a number of fronts.

And we’re still in the very early stages of what AI is really capable of.

We look at the potential dangers of AI and the areas it could affect.

Loss of Jobs & Careers

One of the biggest dangers of AI is the loss of jobs and careers.

The technology has been adopted in various industries such as marketing, manufacturing and healthcare.

According to a World Economic Forum forecast, around 85 million jobs are expected to be lost to automation between 2020 and 2025.

According to Greg Jackson, CEO of Octopus Energy, AI is doing the work of 250 people at the company.

He said the technology has been incorporated into company systems and staff began letting it reply to some customer emails in February 2023.

Futurist Martin Ford said: “The reason we have a low unemployment rate, which doesn’t actually capture people that aren’t looking for work, is largely that lower-wage service sector jobs have been pretty robustly created by this economy.

“I don’t think that’s going to continue.”

As AI robots become smarter, the same tasks will require fewer humans.

While AI is expected to create 97 million new jobs by 2025, many workers will not have the skills needed for these technical roles and could get left behind if companies do not upskill their workforces.

Ford said: “If you’re flipping burgers at McDonald’s and more automation comes in, is one of these new jobs going to be a good match for you?

“Or is it likely that the new job requires lots of education or training or maybe even intrinsic talents — really strong interpersonal skills or creativity — that you might not have?

“Because those are the things that, at least so far, computers are not very good at.”

Even jobs that require graduate degrees and additional post-college training aren’t immune to AI displacement.

This has already been seen in the healthcare industry.

In the US, healthcare organisations are producing clinician messages with the help of artificial intelligence and some patients are not being told about it.

Technology strategist Chris Messina stated that law and accounting are next.

With regard to the legal field, he said:

“Think about the complexity of contracts, and really diving in and understanding what it takes to create a perfect deal structure.

“It’s a lot of attorneys reading through a lot of information – hundreds or thousands of pages of data and documents. It’s really easy to miss things.

“So AI that has the ability to comb through and comprehensively deliver the best possible contract for the outcome you’re trying to achieve is probably going to replace a lot of corporate attorneys.”

Impact on the Creative & Arts Sector

In the creative and arts sector, AI is becoming increasingly prominent and is receiving a mixed reception.

For example, smaller clothing sellers who model their own products online are increasingly replacing themselves with AI models.

Tracy Porter has been searching for ways to replace herself as a model, not only to lighten the load but to bring some diversity to the site.

She has been testing a new service from Israeli startup Botika.

She said the images look so realistic that when she showed her sons, they told her she was out of a job. But Tracy is excited about not having to model her products anymore.

On the other hand, Levi’s faced backlash in March 2023 for its plan to bring in AI-generated models.

Critics slammed the company for not hiring more humans.

It would be no surprise if big fashion retailers have become hesitant about using non-human models.

Smaller companies have not faced the same public disapproval. Their budgets would collapse under the costs of professional hair, makeup and photography – even without inflation, rising borrowing costs and a recession that feels as if it’s been looming forever.

Privacy

There is also concern that AI will adversely affect privacy and security.

One example is China’s use of facial recognition technology in offices, schools and other venues.

In addition to tracking a person’s movements, the Chinese government may be able to gather enough data to monitor a person’s activities, relationships and political views.

Another example is US police departments embracing predictive policing algorithms to anticipate when crimes will happen.

But these algorithms are influenced by arrest rates, which disproportionately impact Black communities.

This becomes a problem because police departments then double down on these communities, leading to over-policing and questions over whether self-proclaimed democracies can resist turning AI into an authoritarian weapon.

Ford added: “Authoritarian regimes use or are going to use it.

“The question is, How much does it invade Western countries, democracies, and what constraints do we put on it?”

Copyright

When it comes to AI-generated content, copyright is a big topic of discussion.

In the music world, a creator made an AI-generated track imitating the voices of The Weeknd and Drake, called ‘Heart on my Sleeve’.

It racked up millions of streams before Spotify, Apple Music, YouTube and TikTok removed it.

Universal Music Group sent a letter to streaming services, asking them to block AI software from using music on their platforms to train generative AI models.

But the legality of AI music remains unclear, even though the US Copyright Office has released new guidelines on how to register music and other art forms made with AI.

The guidelines require the disclosure of the inclusion of AI-generated content within work submitted for registration.

The US Copyright Office will consider whether the use of AI in songs is “the result of ‘mechanical reproduction’ or instead of an author’s own original mental conception”.

But some artists have embraced AI.

At one of his shows, David Guetta played an EDM track with an AI-generated Eminem “rapping” over the beat.

Meanwhile, singer Grimes announced she would allow anyone to use AI to produce songs using her voice as long as she gets a 50% split of the royalties.

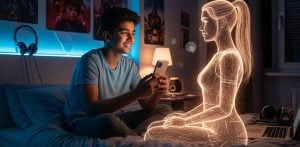

Deepfakes

Deepfake videos and images have also seen a boom online, showing influential figures relaying misinformation.

Meta CEO Mark Zuckerberg was impersonated in a clip in which he thanked Democrats for their ‘service and inaction’ on antitrust legislation.

Advocacy group Demand Progress Action made the video, which used deepfake technology to turn an actor into Zuckerberg.

Another example was Ferdinand Marcos Jr wielding a TikTok troll army to capture the votes of younger Filipinos during the 2022 election.

TikTok runs on an AI algorithm that saturates a user’s feed with content related to previous media they’ve viewed on the platform.

Criticism of the app targets this process and the algorithm’s failure to filter out harmful and inaccurate content, raising doubts over TikTok’s ability to protect its users from dangerous and misleading media.

Deepfakes are particularly concerning for women, who face the possibility of their images being edited into porn clips.

In February 2023, several female Twitch stars discovered their images on a deepfake porn website, where they were depicted engaging in sex acts.

As AI continues to advance, it makes it harder to distinguish what is real and what is not, which can be detrimental to one’s reputation.

Ford says:

“No one knows what’s real and what’s not.

“So it really leads to a situation where you literally cannot believe your own eyes and ears; you can’t rely on what, historically, we’ve considered to be the best possible evidence… That’s going to be a huge issue.”

Currently, no laws protect people from being recreated in digital form by AI.

AI Bias

Various forms of AI bias are also detrimental.

Princeton computer science professor Olga Russakovsky said AI bias goes well beyond gender and race.

In addition to data and algorithmic bias, AI is developed by humans, and humans are inherently biased.

Russakovsky said: “AI researchers are primarily people who are male, who come from certain racial demographics, who grew up in high socioeconomic areas, primarily people without disabilities.

“We’re a fairly homogeneous population, so it’s a challenge to think broadly about world issues.”

The limited experiences of AI creators may be the reason why speech-recognition AI often fails to understand certain dialects and accents or why companies fail to consider the consequences of a chatbot impersonating notorious figures in human history.

Developers and businesses should exercise greater care to avoid recreating powerful biases and prejudices that put minority populations at risk.

As AI continues to permeate various aspects of our lives, it is essential to recognise and mitigate the potential dangers it poses.

Addressing biases, ensuring ethical decision-making, safeguarding privacy and security and addressing socio-economic impacts are critical steps towards responsible and beneficial AI development.

By proactively engaging in discussions and implementing appropriate regulations and safeguards, we can harness the potential of AI while minimising its risks and ensuring a safer, more equitable future.