"This clumsy announcement is fraught with risk"

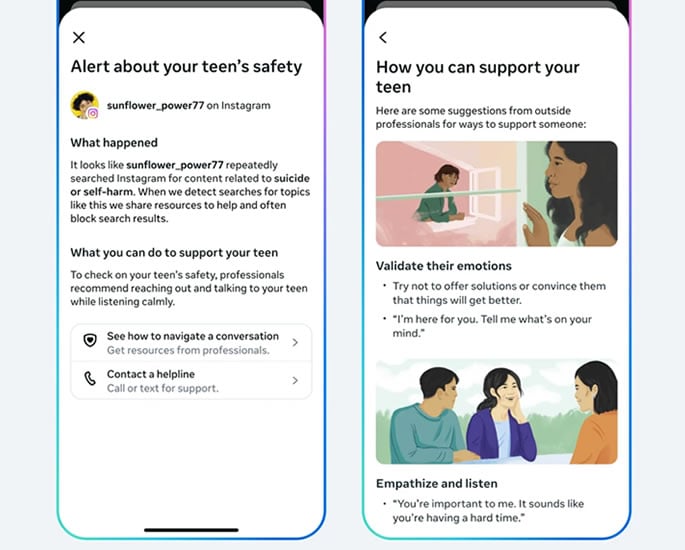

Parents using Instagram’s child supervision tools will soon receive alerts if their teenager repeatedly searches for suicide or self-harm content on the platform.

It is the first time parent company Meta will actively inform parents about harmful search activity, rather than simply blocking results and directing young users to external help.

The feature will launch next week for families using Instagram’s Teen Accounts in the UK, US, Australia and Canada. Other countries will be added later.

Meta said alerts will be triggered when its systems detect repeated searches for suicide or self-harm terms within a short timeframe on Instagram.

Parents will receive notifications by email, text message, WhatsApp or within the Instagram app, depending on the contact information linked to the account.

The company said each alert will include expert guidance to help parents approach what may be a sensitive conversation. It added that the system may sometimes flag searches where there is no serious risk and will “err on the side of caution”.

However, the announcement has drawn criticism from the Molly Rose Foundation.

Chief executive Andy Burrows said: “This clumsy announcement is fraught with risk and we are concerned that forced disclosures could do more harm than good.”

The foundation was set up by the family of Molly Russell, who died in 2017 aged 14 after viewing suicide and self-harm material on platforms including Instagram.

Burrows said: “Every parent would want to know if their child is struggling, but these flimsy notifications will leave parents panicked and ill-prepared to have the sensitive and difficult conversations that will follow.”

Several charities said the move highlights wider concerns about harmful material remaining accessible to young users.

Ged Flynn, chief executive of Papyrus Prevention of Young Suicide, said the charity welcomed steps to involve parents but warned the underlying problem persisted.

He said Meta was “neglecting the real issue that children and young people continue to be sucked into a dark and dangerous online world”.

He added: “Parents contact us every day to say how worried they are about their children online.

“They don’t want to be warned after their children search for harmful content; they don’t want it to be spoon-fed to them by unthinking algorithms.”

Leanda Barrington-Leach, executive director of 5Rights Foundation, said: “If Meta is to take child safety seriously, it needs to return to the drawing board and make its systems age-appropriate by design and default.”

Burrows also referred to research published by the Molly Rose Foundation last September, which found Instagram still “actively” recommends material about depression, suicide and self-harm to “vulnerable young people”.

He added: “The onus should be on addressing these risks rather than making yet another cynically timed announcement that passes the buck to parents.”

Meta disputed the findings at the time, saying the research “misrepresents our efforts to empower parents and protect teens”.

Instagram said it plans to extend similar alerts in the coming months to cases where teenagers discuss suicide or self-harm with its AI chatbot, noting that young people “increasingly turn to AI for support”.

The changes come as governments intensify scrutiny of social media companies’ approach to child safety.

In December 2025, Australia introduced a ban on social media use for under-16s. Spain, France and the United Kingdom are considering comparable measures.

Meta chief executive Mark Zuckerberg and Instagram head Adam Mosseri recently appeared in court in the United States to defend the company against allegations it targeted younger users.