"We will not hesitate to go further if necessary"

UK schools are being urged to remove identifiable pupil photos from websites and social media after blackmailers used AI tools to create sexually explicit images of children.

Child safety experts and the UK’s National Crime Agency warned that criminals are manipulating publicly available school images into child sexual abuse material (CSAM) before demanding money to stop the content being shared online.

The warning follows a recent case involving an unnamed UK secondary school. Criminals reportedly took photos from the school’s website or social media accounts and used AI tools to create explicit images.

The manipulated images were then sent to the school alongside threats to publish them online unless a payment was made.

The Internet Watch Foundation said it worked to prevent the images from spreading online.

The organisation shared digital fingerprints of the material, known as “hashes”, with major tech platforms to stop uploads.

The watchdog added that 150 of the manipulated images from the case could be classified as CSAM under UK law.

Jess Phillips described the incident as a “deeply worrying emerging threat”.

She said: “We will not hesitate to go further if necessary and make sure our laws stay up to date with the latest threats.”

The government has already announced plans to ban the possession of AI models designed to generate CSAM.

The IWF said the incident was not isolated and confirmed it was aware of other blackmail attempts involving manipulated school images in the UK.

The Early Warning Working Group, a UK advisory body focused on online harms, has now issued guidance advising schools to rethink how they use pupil imagery online.

Although the issue is not yet widespread, the group warned it is “only a matter of time” before more schools are targeted.

The guidance recommends removing images that clearly show pupils’ faces. Instead, schools are encouraged to use photos taken from a distance, blurred images or pictures of children taken from behind.

The advice also warned against publishing “identifiable information” such as “names or faces”.

Schools were further urged to consider whether pupil images are necessary at all.

The guidance stated that establishments should consider “whether using imagery without children and young people’s faces can still achieve your objectives”.

It added that schools could continue “celebrating achievements more safely” while “minimising risks”.

Additional recommendations included avoiding the use of full names in photo captions, applying stronger privacy settings to websites and social media accounts, and regularly reviewing online content featuring children.

The checklist also advised schools to frequently renew image consent agreements with parents and guardians.

If blackmail attempts occur, schools were told to contact police immediately, preserve the criminal material and remove from public view the original images that had been tampered with.

The working group includes organisations such as the NSPCC, the National Crime Agency, Education Scotland and the Welsh government.

The Confederation of School Trusts said schools would “carefully consider” the recommendations while balancing pupil safety with celebrating achievements.

Leora Cruddas, the CST’s chief executive, said:

“As educators, we instinctively want to celebrate children’s achievements and that includes sharing photos and videos of all the good things that go on in our schools.

“It is deeply depressing that in doing so we potentially have to contend with threats from abusers and scammers.”

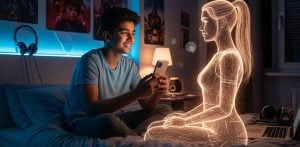

AI-powered sextortion has become a growing concern across the UK.

Sextortion typically involves criminals coercing victims into sharing intimate images before threatening to release them publicly unless money or more content is provided.

Advances in generative AI have allowed offenders to create fake explicit images using ordinary photos taken from social media accounts.

Several British teenagers have taken their own lives after receiving sextortion threats.

The Report Remove service, which helps children report explicit imagery online, said it received 394 reports from under-18s involving blackmail attempts in 2025, a 34% increase compared to 2024.

Authorities have linked many sextortion operations to organised criminal gangs based overseas, particularly in West Africa, including Nigeria.

Investigators believe the recent school case involved language commonly found in negotiation “scripts” used by sextortion gangs.

Some schools have already begun changing their policies in response to the threat.

In 2025, the Loughborough Schools Foundation redesigned its website to remove recognisable images of pupils.