US artificial intelligence company Anthropic says a hacker used its technology to carry out sophisticated cyberattacks.

Anthropic, which makes the chatbot Claude, says its tools were used “to commit large-scale theft and extortion of personal data”.

The firm revealed its AI was used to write malicious code for cyberattacks. In another case, North Korean scammers allegedly used Claude to fraudulently secure remote jobs at major US companies.

Anthropic says it disrupted the actors involved, reported the incidents to authorities, and improved its detection tools to prevent further abuse.

The rise of AI-driven coding has created opportunities for both legitimate developers and cyber-criminals, and experts warn it poses new risks to organisations worldwide.

Anthropic says it uncovered a case of “vibe hacking”, where its AI was used to breach at least 17 organisations, including government agencies.

The firm said hackers “used AI to what we believe is an unprecedented degree”.

They used Claude to “make both tactical and strategic decisions, such as deciding which data to exfiltrate, and how to craft psychologically targeted extortion demands”.

The chatbot even suggested ransom amounts for victims.

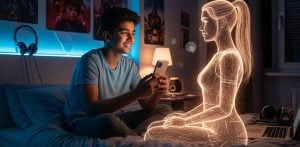

Agentic AI, which operates autonomously, has been labelled the next frontier in artificial intelligence. But Anthropic’s findings show its darker side when misused by cyber-criminals.

Alina Timofeeva, an adviser on cybercrime and AI, said:

“The time required to exploit cybersecurity vulnerabilities is shrinking rapidly.

“Detection and mitigation must shift towards being proactive and preventative, not reactive after harm is done.”

Anthropic also said “North Korean operatives” used its models to create fake profiles and apply for remote jobs at US Fortune 500 companies.

The firm said using AI in this way represents “a fundamentally new phase for these employment scams”.

According to Anthropic, AI was used to write applications, translate communications, and assist with coding once the fraudsters secured positions.

Geoff White, co-presenter of the BBC podcast The Lazarus Heist, explained:

“Often, North Korean workers are sealed off from the outside world, culturally and technically, making it harder for them to pull off this subterfuge.

“Agentic AI can help them leap over those barriers, allowing them to get hired.”

He warned that “their new employer is then in breach of international sanctions by unwittingly paying a North Korean”.

But White added that AI “isn’t currently creating entirely new crimewaves”, noting that many ransomware attacks still use “tried-and-tested tricks like sending phishing emails and hunting for software vulnerabilities”.

Nivedita Murthy, senior security consultant at Black Duck, said:

“Organisations need to understand that AI is a repository of confidential information that requires protection, just like any other form of storage system.”