“Yes, they can interact in NSFW mode”

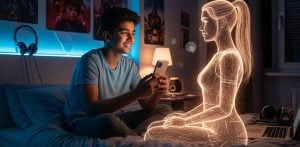

Grok, the chatbot from Elon Musk’s xAI, has quietly rolled out a new feature that lets users interact with animated AI avatars capable of NSFW (not safe for work) conversations.

Dubbed “Companions”, the feature includes characters like Ani, a goth anime girl, and Rudy, a cartoon red panda, both of whom can now engage users in sexually suggestive roleplay.

Access to the feature is currently limited to SuperGrok subscribers, though some free users reported gaining access.

The characters can be toggled on in the app’s settings, with Musk describing the current rollout as a “soft launch”.

He added: “We will make this easier to turn on in a few days.”

The avatars respond with facial expressions and animated gestures.

Ani, in particular, has drawn attention for her lingerie-clad “NSFW” mode.

This mode reportedly unlocks only after the user has built up a virtual relationship with the character. As one user explained:

“Yes, they can interact in NSFW mode, though you have to reach a certain relationship level with the bot before it’ll go to the next step.”

Grok’s official description of the update reads: “Companions is a new feature for SuperGrok subscribers, providing interactive 3D animated AI personas like Ani (a goth anime girl) and Bad Rudy.

“Enable it in settings to chat; they react with movements, expressions, and can handle NSFW modes. Fun way to engage with AI!”

Users have also discovered a new character, “Chad”, in development.

The app’s existing voice mode already lets users converse with a faceless version of Grok, complete with an NSFW toggle.

The Companions feature builds on this with avatars designed to simulate intimacy through both text and animation.

The launch comes just a week after Grok was criticised for posting antisemitic content, which xAI blamed on a coding update.

SuperGrok now has two new companions for you, say hello to Ani and Rudy! pic.twitter.com/SRrV6T0MGT

— DogeDesigner (@cb_doge) July 14, 2025

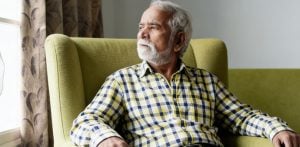

The introduction of sexually interactive AI bots has now sparked further concern over the chatbot’s direction, particularly over its potential impact on users’ mental health.

Studies have warned of the risks posed by AI companions that simulate romantic or sexual relationships.

One analysis concluded: “AI girlfriends can perpetuate loneliness because they dissuade users from entering into real-life relationships, alienate them from others, and, in some cases, induce intense feelings of abandonment.”

Despite these warnings, Musk has teased even more extreme possibilities.

He suggested future updates could let users “make these AI bots real”, referring to Tesla’s yet-to-be-delivered Optimus humanoid robots.

Such a promise remains far-fetched for now, but experts warn that even the illusion of intimacy could cause emotional harm, particularly among isolated or vulnerable users.

Still, xAI seems unfazed.

The Companions feature reflects a broader trend in the AI industry, where generative tools are increasingly being used to simulate relationships and sexual experiences.

But in this case, it’s being delivered through a platform with massive reach and minimal oversight.